I wanted to do a test run of VMware vSphere 6 while operating only as an end user, and I wanted to do it inside vCloud Director (which is no longer available to end users, only to service providers).

This took some effort but really wasn’t too bad. Trying to deploy the vCenter Server Appliance (VCSA) was a bust, though, as I don’t have direct access to the vCloud Director infrastructure and I really didn’t want to spend some of my precious resources within the nested ESXi systems on a management application. So I took the easy way out and ran vCenter on Windows Server 2012 R2.

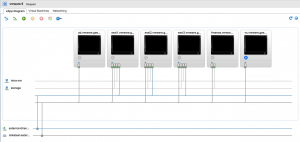

This is 1 A/D controller, 1 vCenter, 1 FreeNAS file server, and 3 ESXi hypervisors. Total time to put this together, not including the VCSA battles, was about 3 hours. The VCSA attempts took many more hours, even with the help of my vendor, who tried to deploy it for me and import it into my virtual data center (it just didn’t work as expected: the networking behaved oddly and the IP address could not be changed).

There are 4 networks in place:

- miketest-internal – NAT to public networks firewall for management (A/D and vCenter)
- storage – internal vApp network – ESXi mounts NFS storage (no internet access) (ESXi and FreeNAS)
- intra-vm – internal vApp network – virtual machines can talk privately (no internet access) (VMs)
- external-direct-130 – NAT to public networks firewall for application serving (VMs)

I installed FreeBSD 10.2-RELEASE and it worked just fine. I ran `make buildworld && make installworld` for fun and it progressed along quite well, though not as fast as it would without double virtualization or directly on my trashcan.

I took some screenshots at the end of my run, so the nested VMware infrastructure was already shut down.

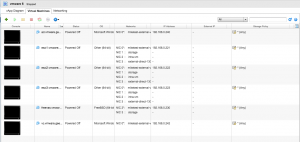

And last, the configuration of the VMs used for this experiment:

- ad.vmware.geeks.org – 2 GiB RAM, 1 vCPU, 20 GiB storage
- esxi{1,2,3}.vmware.geeks.org – 8 GiB RAM, 2 vCPU, 20 GiB storage
- freenas.vmware.geeks.org – 8 GiB RAM, 2 vCPU, 40 GiB boot, 6×200 GiB RAID10 ZFS
- vc.vmware.geeks.org – 10 GiB RAM, 2 vCPU, 120 GiB storage

Except for the FreeNAS system, all of the VMs were configured to use VMXNET3 network interfaces.

All systems used the LSI SAS SCSI adapter.
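
For anyone sizing a similar vApp, here is a rough Python sketch that tallies the VM configuration listed above. The `VM` class and the helper names are mine, invented for illustration. The usable-capacity figure assumes the RAID10 pool is built from two-way mirrors and ignores ZFS overhead, which I have not verified against the actual pool.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass(frozen=True)
class VM:
    """One VM from the list above (hypothetical helper type)."""
    name: str
    ram_gib: int
    vcpus: int
    disks_gib: Tuple[int, ...]


# Values copied from the VM list in this post.
INVENTORY = [
    VM("ad", 2, 1, (20,)),
    VM("esxi1", 8, 2, (20,)),
    VM("esxi2", 8, 2, (20,)),
    VM("esxi3", 8, 2, (20,)),
    VM("freenas", 8, 2, (40,) + (200,) * 6),  # 40 GiB boot + 6 data disks
    VM("vc", 10, 2, (120,)),
]


def totals(vms: Iterable[VM]) -> Tuple[int, int, int]:
    """Return (RAM GiB, vCPUs, raw storage GiB) summed over all VMs."""
    vms = list(vms)
    ram = sum(vm.ram_gib for vm in vms)
    vcpus = sum(vm.vcpus for vm in vms)
    storage = sum(sum(vm.disks_gib) for vm in vms)
    return ram, vcpus, storage


def raid10_usable_gib(disk_count: int, disk_gib: int) -> int:
    """Usable space of striped two-way mirrors (assumption), before ZFS overhead."""
    return (disk_count // 2) * disk_gib


if __name__ == "__main__":
    ram, vcpus, storage = totals(INVENTORY)
    print(f"RAM: {ram} GiB, vCPUs: {vcpus}, raw storage: {storage} GiB")
    print(f"FreeNAS pool usable (approx.): {raid10_usable_gib(6, 200)} GiB")
```

These are provisioned sizes only; what the provider actually bills or reserves depends on how the virtual data center allocates resources.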